Algorithmic approaches for assessing pollution reduction policies can reveal shifts in environmental protection of minority communities, according to Stanford researchers

Applying machine learning to a U.S. Environmental Protection Agency initiative reveals how key design elements determine which communities bear the burden of pollution. The approach could help ensure fairness and accountability in machine learning used by government regulators.

The perils of machine learning – using computers to identify and analyze data patterns, such as in facial recognition software – have made headlines lately. Yet the technology also holds promise to help enforce federal regulations, including those related to the environment, in a fair, transparent way, according to a new study by Stanford researchers.

The analysis, published this week in the proceedings of the Association for Computing Machinery Conference on Fairness, Accountability and Transparency, evaluates machine learning techniques designed to support a U.S. Environmental Protection Agency (EPA) initiative to reduce severe violations of the Clean Water Act. It reveals how two key elements of so-called algorithmic design influence which communities are targeted for compliance efforts and, consequently, who bears the burden of pollution violations. Such analyses are particularly timely given recent executive actions calling for renewed focus on environmental justice.

“Machine learning is being used to help manage an overwhelming number of things that federal agencies are tasked to do – as a way to help increase efficiency,” said study co-principal investigator Daniel Ho, the William Benjamin Scott and Luna M. Scott Professor of Law at Stanford Law School. “Yet what we also show is that simply designing a machine learning-based system can have an additional benefit.”

Pervasive noncompliance

The Clean Water Act aims to limit pollution from entities that discharge directly into waterways, but in any given year, nearly 30 percent of such facilities self-report persistent or severe violations of their permits. In an effort to halve this type of noncompliance by 2022, EPA has been exploring the use of machine learning to target compliance resources.

To test this approach, EPA reached out to the academic community. Among its chosen partners: Stanford’s Regulation, Evaluation and Governance Lab (RegLab), an interdisciplinary team of legal experts, data scientists, social scientists and engineers that Ho heads. The group has done ongoing work with federal and state agencies to aid environmental compliance.

In the new study, RegLab researchers examined how permits with similar functions, such as wastewater treatment plants, were classified by each state in ways that would affect their inclusion in the EPA national compliance initiative. Using machine learning models, they also sifted through hundreds of millions of observations – an impossible task with conventional approaches – from EPA databases on historical discharge volumes, compliance history and permit-level variables to predict the likelihood of future severe violations and the amount of pollution each facility would likely generate. They then evaluated demographic data, such as household income and minority population, for the areas where each model indicated the riskiest facilities were located.

Devil in the details

The team’s algorithmic process helped surface two key ways that the design of the EPA compliance initiative could influence who receives resources. These differences centered on which types of permits were included or excluded, as well as how the goal itself was articulated.

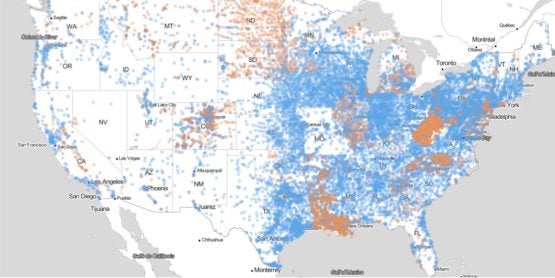

Map of U.S. wastewater treatment facilities with general permits (orange) intended to cover multiple dischargers engaged in similar activities and individual permits (blue) that cover a specific facility. Individual states assign the permits based on different classifications. A national regulatory initiative to reduce pollution in waterways would not apply to general permits initially, leaving out approximately 40 percent of all wastewater treatment facilities. (Image credit: Benami, et al.)

In the process of figuring out how to achieve the compliance goal, the researchers first had to translate the overall objective into a series of concrete instructions – an algorithm – needed to fulfill it. As they were assessing which facilities to run predictions on, they noticed an important embedded decision. While the EPA initiative expands covered permits by at least sevenfold relative to prior efforts, it limits its scope to “individual permits,” which cover a specific discharging entity, such as a single wastewater treatment plant. Left out are “general permits,” intended to cover multiple dischargers engaged in similar activities and with similar types of effluent. A related complication: Most permitting and monitoring authority is vested in state environmental agencies. As a result, functionally similar facilities may be included or excluded from the federal initiative based on how states implement their pollution permitting process.
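The scoping effect described above can be illustrated with a minimal sketch. It assumes a toy list of functionally similar wastewater facilities in two hypothetical states, "A" and "B", that assign permit types differently; the records, the state labels and the `coverage_by_state` helper are invented for illustration and are not EPA data or the researchers' code.

```python
from collections import defaultdict

# Hypothetical records: (state, facility type, permit type). Not EPA data.
FACILITIES = [
    ("A", "wastewater", "individual"),
    ("A", "wastewater", "individual"),
    ("A", "wastewater", "general"),
    ("B", "wastewater", "general"),
    ("B", "wastewater", "general"),
    ("B", "wastewater", "individual"),
]


def coverage_by_state(facilities, covered_permit="individual"):
    """Return, per state, the share of facilities whose permit type is in scope."""
    totals = defaultdict(int)
    covered = defaultdict(int)
    for state, _kind, permit in facilities:
        totals[state] += 1
        if permit == covered_permit:
            covered[state] += 1
    return {state: covered[state] / totals[state] for state in totals}


# Same kind of facility, different coverage, driven only by permit classification.
shares = coverage_by_state(FACILITIES)
for state, share in sorted(shares.items()):
    print(f"State {state}: {share:.0%} of wastewater facilities in scope")
```

In this toy setup the two states have identical facilities, yet one ends up with twice the coverage of the other simply because of how permits were assigned.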

“The impact of this environmental federalism makes partnership with states critical to achieving these larger goals in an equitable way,” said co-author Reid Whitaker, a RegLab affiliate and 2020 graduate of Stanford Law School now pursuing a PhD in the Jurisprudence and Social Policy Program at the University of California, Berkeley.

Second, the current EPA initiative focuses on reducing rates of noncompliance. While there are good reasons for this policy goal, the researchers’ algorithmic design process made clear that prioritizing it over reducing pollution discharges that exceed permitted limits would have a powerful unintended effect. Namely, it would shift enforcement resources away from the most severe violators, which are more likely to be in densely populated minority communities, and toward smaller facilities in more rural, predominantly white communities, according to the researchers.
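How the choice of objective changes who gets targeted can be sketched in a few lines. The example assumes each facility already has a model-predicted violation probability and an estimated over-limit discharge; every number, field name and facility below is hypothetical and was constructed only to show the mechanism, so it says nothing about the size of the effect in the actual study.

```python
from dataclasses import dataclass


@dataclass
class Facility:
    name: str
    p_violation: float          # hypothetical predicted probability of a severe violation
    excess_if_violating: float  # hypothetical over-limit discharge, arbitrary units
    minority_share: float       # hypothetical share of minority residents nearby


# Toy data, not drawn from EPA databases.
FACILITIES = [
    Facility("small_rural_1", 0.90, 1.0, 0.10),
    Facility("small_rural_2", 0.85, 2.0, 0.15),
    Facility("large_urban_1", 0.60, 50.0, 0.70),
    Facility("large_urban_2", 0.55, 80.0, 0.65),
    Facility("mid_suburban", 0.70, 5.0, 0.30),
]


def top_k(facilities, score, k):
    """Pick the k facilities with the highest score under a given objective."""
    return sorted(facilities, key=score, reverse=True)[:k]


def mean_minority_share(selected):
    """Average nearby minority share across the selected facilities."""
    return sum(f.minority_share for f in selected) / len(selected)


# Objective 1: reduce the rate of noncompliance (rank by violation probability).
by_rate = top_k(FACILITIES, lambda f: f.p_violation, k=2)
# Objective 2: reduce over-limit pollution (rank by expected excess discharge).
by_excess = top_k(FACILITIES, lambda f: f.p_violation * f.excess_if_violating, k=2)

print([f.name for f in by_rate], mean_minority_share(by_rate))
print([f.name for f in by_excess], mean_minority_share(by_excess))
```

With the same predictions and the same inspection budget, the two objectives select disjoint sets of facilities here, which is why the article stresses making the goal explicit before any model is deployed.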

“Breaking down the big idea of the compliance initiative into smaller chunks that a computer could understand forced a conversation about making implicit decisions explicit,” said study lead author Elinor Benami, a faculty affiliate at the RegLab and assistant professor of agricultural and applied economics at Virginia Tech. “Careful algorithmic design can help regulators transparently identify how objectives translate to implementation while using these techniques to address persistent capacity constraints.”

Ho is also a professor of political science in Stanford’s School of Humanities and Sciences, senior fellow at the Stanford Institute for Economic Policy Research (SIEPR), associate director of the Stanford Institute for Human-Centered Artificial Intelligence and faculty fellow at the Center for Advanced Study in the Behavioral Sciences. Co-authors of the study include Hongjin Lin, a research fellow at Stanford Law School; Brandon Anderson, head of data science in Stanford’s RegLab; and Vincent La, a data scientist in Stanford’s RegLab.

The research was supported by the Stanford Woods Institute for the Environment and Schmidt Futures.
