Stanford’s Robot Makers: Mark Cutkosky

Mark Cutkosky is the Fletcher Jones Chair in the School of Engineering and a professor of mechanical engineering at Stanford University. His lab focuses on biomimetic engineering – robots and technologies that take inspiration from nature – and improving robots’ abilities to interact with the physical world. This Q&A is one of five featuring Stanford faculty who work on robots as part of the project Stanford’s Robotics Legacy.

What inspired you to take an interest in robots?

Mark Cutkosky as a graduate student at Carnegie Mellon University, working on his first robotics project. (Image credit: AP News)

I became involved in robotics in the early 1980s when I started graduate school at Carnegie Mellon University in Pittsburgh. I had no prior experience or special interest in robotics. However, because I had experience from industry designing manufacturing equipment, I was attached to a new project on a robotic manufacturing cell. I discovered that I liked robotics and stayed beyond my master’s degree for a PhD.

What was your first robotics project?

My first projects (circa 1982) involved designing a gripper for grasping turbine blades (shown in image) and an instrumented force-sensing compliant wrist. The idea was to “tune” the wrist to different levels of stiffness depending on whether the robot was working with light or heavy objects. The results are described in two patents (here and here) and a paper.

Thinking back over your own concept of robotics, what did you hope robots would do in “the future”?

At the time I started, the big push was to make robots useful in manufacturing. Ideas like autonomous cars and household robots were science fiction, although we could see the way forward.

What we did not realize was how much computation would improve. My cell phone is now far more powerful than the mainframe computer that I shared with several other graduate students at CMU. This makes many things possible, like practical computer vision and speech recognition and even those autonomous cars.

What are you working on now related to robotics?

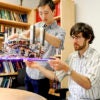

Graduate students Salomon Trujillo and John Ulmen, along with graduate research assistant Alan Asbeck, and Cutkosky observe the Stickybot III in a trial run on a glass surface in 2010. (Image credit: L.A. Cicero)

Most of the current work in my lab centers around bio-inspired robots that interact physically with their environments – climbing, sticking, perching, grasping, pushing. For this we develop new hands, legs, gecko-inspired adhesives, sensors, etc.

Robotics often starts with what I call “no touch” applications, like quadrotors that fly around and take pictures but don’t interact physically with the world, or mobile robots that wander around hallways and try not to bump into things. In comparison, animals are very good at exploiting interactions with the environment. As robots move out of relatively “sterile” environments like manufacturing and into the unpredictable world at large, they need to do the same.

How have the big-picture goals or trends of robotics changed in the time that you’ve been in this field?

The single biggest change in the 30-plus years that I’ve been involved in robotics is computation. This has had an enormous effect on computer vision, signal processing (for tactile sensing, for example), machine learning and control. It has allowed us to explore applications that were previously out of reach, and we have learned many new things about physical interaction and control along the way.

Another big effect has been the drop in the cost of sensing. We owe this effect largely to the smartphone industry. Every smartphone contains many kinds of sensors, all very cheap and high quality. These same sensors, and the chips used to process their information, are now available for robots.

A third change, which I think we’re just starting to see the importance of, comes from new fabrication processes that let us 3D-print combinations of hard and soft materials on demand. We can now make “soft” robots that are more responsive and much safer to work around than the hydraulic monster in my photograph.

It’s clear that robotics has grown enormously. There is huge interest from our students. Many new applications seem to be within reach that looked like science fiction a couple of decades ago.